Technology has evolved to the point where we now have artificial intelligence that can write amazing essays. AI-written essays are turning out to be exceptional in quality and astonishingly human-like in nearly every aspect. The most striking feature of these AI-powered essay generators is that they can produce top-notch essays in minutes!

It is quite natural for us humans to wonder how artificial intelligence can do so. After all, aren’t humans the most intelligent entities on Earth?! If you are a student looking to get your essay written with online help from experts, you would want to know how a machine wrote better than you, wouldn’t you?

Generative AI is the category of AI that can produce essays, blogs, and other written content in a flash. There are several generative AI models, but one of the most renowned and most powerful is GPT. GPT, or Generative Pre-trained Transformer, is a deep learning neural network model that shot to fame in 2022 due to its ease of use and astonishing capabilities. GPT-based tools and platforms can generate stellar essays, analyze literature, write programs, solve math problems, and do much more.

This article looks at the processes that make GPT such a powerful essay and content generator.

What is GPT?

GPT is a generative AI model and currently the most popular example of natural language generation. Short for Generative Pre-trained Transformer, GPT employs deep learning neural networks to understand and process data and to generate content in natural languages.

We have to look at each of these technicalities individually to understand how it does so.

- Natural Language Generation

Natural language generation (NLG) is a sub-branch of natural language processing, a branch of AI and computational linguistics that deals with natural human languages. NLG is focused on generating natural human language content. The field is also a subset of generative AI, a type of technology concerned with designing and developing AI systems that can create content like essays, blogs, images, math solutions, computer programs, etc.

- Neural Networks

Artificial neural networks, or simply neural networks, are the backbone of deep learning algorithms. Deep learning is a subfield of machine learning that uses multi-layered neural networks, loosely modeled on the human brain and its neural signaling.

Neural networks are often more capable than traditional machine learning algorithms and approaches. Two key features of neural networks are automated feature extraction from data and the ability to handle large data sets. Generative pre-trained transformers are special neural networks that can analyze and generate content based on the kind of data they are trained on.

Transformers are specially designed neural networks that determine context from input data and identify underlying relationships in sequential or other data patterns. With the right training, transformer neural networks can write essays on almost any topic, solve complex math problems, write computer programs, conjure blogs, and much more.

No wonder the Internet is flooded with mentions of GPT, GPT-3, GPT-4, and ChatGPT.

ChatGPT has been the flagship of AI proliferation in the last few years. According to Reuters, ChatGPT has set the record for the fastest-growing user base of any up-and-coming software platform. GPT is also the engine running online content-generating applications such as Jasper AI, Quillbot, and ContentBot.

But how exactly do the transformer neural networks mimic human writing?

How Does GPT Generate Superb Essays?

Transformer neural network models comprise a neural network stack of multiple layers.

- As mentioned, transformers are specialized neural networks that can understand the context, relationships, and topic of any data they operate on. These networks use an advanced and ever-evolving set of mathematical and statistical techniques, known as self-attention, to analyze the data and detect patterns in it.

- Transformers are powerful enough to identify relationships between distant elements in a data stream and how they relate & influence each other.

- In essays & similar language-based content, words represent data. Transformers in GPT can determine how words associate with each other, how they relate to other words or strings of words (sentences & paragraphs), and what effect they have on other aspects of the text.
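As a rough illustration of words being treated as data, the sketch below maps each word of a sentence to an integer id and then to a small vector. The function names, the four-dimensional vectors, and the random values are all assumptions made for illustration. Real GPT models split text into subword tokens rather than whole words, and they learn their vectors during training.

```python
import random


def build_vocab(words):
    """Assign each distinct word an integer id in order of first appearance."""
    vocab = {}
    for word in words:
        if word not in vocab:
            vocab[word] = len(vocab)
    return vocab


def make_embeddings(vocab_size, dim=4, seed=0):
    """Create one random vector per id (a stand-in for learned embeddings)."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(vocab_size)]


# Hypothetical example sentence, split on whitespace for simplicity.
words = "the essay explains how the model reads the essay".split()

vocab = build_vocab(words)
token_ids = [vocab[w] for w in words]      # words become numbers
embeddings = make_embeddings(len(vocab))   # one vector per distinct word
vectors = [embeddings[i] for i in token_ids]

print(token_ids)  # [0, 1, 2, 3, 0, 4, 5, 0, 1]
```

Repeated words such as "the" share the same id and therefore the same vector. These vectors are the numbers that the rest of the network operates on.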

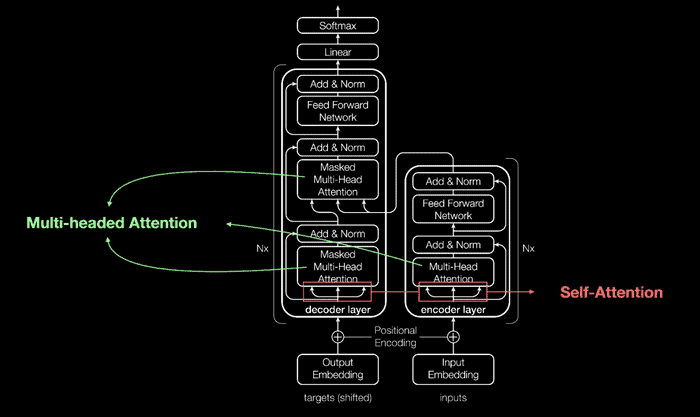

Below is what a transformer neural network model looks like.

Source: www.nvidia.com

On the left is the encoder section of the transformer. Its input goes through a multi-headed self-attention sub-layer and a feed-forward network sub-layer. The right-hand side is the decoder side, which has two multi-headed attention sub-layers and a feed-forward neural network.

The attention mechanism carries out a word-to-word operation. It involves the system interacting with input data in sequence and determining which input data should be given priority. By doing this, GPT determines nouns and named entities, contexts, concepts, and topics. When you enter an essay prompt in a GPT-based tool, it dissects and analyzes every word in relation to the others using the self-attention mechanism.
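The word-to-word operation can be sketched in a few lines of plain Python. This is a simplified illustration, not how GPT is actually implemented: real transformers first pass each word vector through learned query, key, and value projections and run several attention heads in parallel, all of which are left out here. The two-dimensional word vectors are hand-picked assumptions.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Simplified self-attention where queries, keys, and values are the inputs themselves.

    Returns (weights, outputs); weights[i][j] is how much word i attends to word j.
    """
    dim = len(vectors[0])
    weights, outputs = [], []
    for query in vectors:
        # Score the current word against every word, scaled by sqrt(dim).
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted average of all word vectors.
        outputs.append([sum(w * v[k] for w, v in zip(row, vectors)) for k in range(dim)])
    return weights, outputs


# Hypothetical word vectors, chosen by hand so that "it" lies close to "pool".
words = ["pool", "tank", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]

weights, outputs = self_attention(vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

Each row of weights sums to 1, so it can be read as the share of priority one word gives to every word in the input. With these made-up vectors, "it" gives more weight to "pool" than to "tank".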

Here’s an example to elucidate the basics.

He kept chugging water from the tank to the pool until it was full.

When this sentence is input, each word is converted into a vector, and positional encoders tag each word with its position in the sentence. The self-attention mechanism then uses these tagged vectors to run vector dot product operations between all word vectors. In doing so, it finds the strongest relationships among all the words.

He kept chugging water from the tank to the pool until it was empty.

In the two sentences, the word "it" refers to different things: the pool in the first and the tank in the second. Transformers decipher these contextual meanings to learn relationships between words. This is the basic idea behind transformers and the GPT architecture. Words are encoded, and their meaning, relationships, and influence are determined to decipher context and overall meaning.
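To make the positional step more concrete, here is a minimal sketch of the sinusoidal positional encoding used in the original transformer design. It is shown only as an illustration: GPT models are generally described as learning their position vectors during training instead. The function names and the example vectors are assumptions.

```python
import math


def positional_encoding(position, dim):
    """Sinusoidal encoding of one position as a vector of length dim (dim must be even)."""
    encoding = []
    for i in range(0, dim, 2):
        angle = position / (10000 ** (i / dim))
        encoding.append(math.sin(angle))  # even index
        encoding.append(math.cos(angle))  # odd index
    return encoding


def add_positions(word_vectors):
    """Add each position's encoding to the word vector found at that position."""
    dim = len(word_vectors[0])
    return [
        [x + p for x, p in zip(vec, positional_encoding(pos, dim))]
        for pos, vec in enumerate(word_vectors)
    ]


# The same hypothetical word vector appearing at positions 0 and 1.
same_word = [[0.5, 0.5, 0.5, 0.5], [0.5, 0.5, 0.5, 0.5]]
encoded = add_positions(same_word)

print(encoded[0])  # [0.5, 1.5, 0.5, 1.5]
print(encoded[1])
```

After the encoding is added, the two copies of the word no longer have identical vectors, so the dot products in the attention step can take word order into account.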

Transformer neural networks employ linear algebra, calculus, probability, and statistics to turn natural language into numerical representations that can be manipulated mathematically. If you wish to dig into them further, brush up on your maths, stats, and linguistics.

On the final leg of this article, let us take a quick look at three of the most popular GPT-powered AI essay writing tools.

3 Best AI Essay Writers That Use GPT

- Rytr

Touted as the most accurate GPT-3 powered content generator, Rytr is an AI assistant that can help you write anything. You can generate high-quality content with this platform, including essays, blogs, e-mails, marketing copy, and theses.

The pricing plans are quite affordable, starting from $9 per month. There’s even a free plan that allows you to generate 10,000 characters every month.

- Jasper AI

One of the most popular AI writing tools on the Web, Jasper AI can write essays and paraphrase text. It also checks for grammar and plagiarism. However, all these features come at a hefty price of $39 per month.

- Textero AI

Another platform for generating essays for free, Textero is a good choice for students. While it can generate good content, it only generates top-notch content when you opt for the premium version. The generated content often has formatting, word choice, integrity, and mechanical issues.

With that, we wrap up this write-up. We hope this was an interesting read. If GPT, generative AI, and NLG interest you, or you wish to create your own AI essay writer, enroll in an online AI course today.